Role: UX Researcher & Designer | Timeline: 4 weeks + ongoing

Tools: Netnography, Figma, Anything.com | Status: Active sandbox project

Challenge: How do you apply health education principles and chronic illness support frameworks to digital product design, without reinventing evidence-based approaches that already work?

The Problem

People with Type 2 diabetes face constant decision-making demands around monitoring, medication, diet, and lifestyle. Existing self-management tools often increase cognitive load rather than reduce it. When users struggle to maintain “perfect” adherence, the tools themselves become sources of shame and disengagement.

This isn’t a new problem in health education, but it persists in digital health products.

Research Approach: Social Listening as Systematic Qualitative Research

To gather authentic user experiences within the project timeline, I used netnography: systematic analysis of naturally occurring online discussions in diabetes-focused communities on Reddit.

Why This Method

- Provides access to authentic, unprompted user experiences

- Captures emotional and psychological dimensions often underreported in clinical settings

- Identifies gaps between clinical guidelines and lived experience

- Ethical approach: only public discussions were analyzed, and quotes are paraphrased to protect privacy

Research Process

- Identified diabetes self-management subreddits with active discussion

- Analyzed first-person accounts of daily management experiences

- Conducted thematic analysis to identify patterns in pain points

- Synthesized insights into design requirements

Key Findings

| Theme | User Voice | Design Implication |
|---|---|---|
| Cognitive overload leads to avoidance | “I’m not checking it because I know it’s bad.” | Reduce decision-making burden, not just provide information |
| Tools don’t help interpret progress | “My goal was to get off all meds… it seems like I failed.” | Reframe “success” beyond clinical outcomes alone |
| Self-management experienced as moral judgment | Disruptions trigger self-blame and shame | Remove evaluative language and performance metrics |

Problem Statement: When tools fail to help users understand progress, self-care can feel like failure, turning health management into a source of shame rather than support, especially when life disruptions occur.

Translating Health Education Frameworks to UX Design

I drew on established chronic illness support principles rather than inventing new approaches:

- Capacity fluctuates (spoon theory, pacing) → Design for fluctuating capacity, not ideal behavior

- Recovery after setbacks is normal → Make progress understandable and recoverable

- Shame reduces engagement → Reduce moral judgment through language and interaction design

Critical design question: How can AI support decision-making without introducing new forms of surveillance or judgment?

Solution: Intentionally Minimal Intervention

Core Interactions

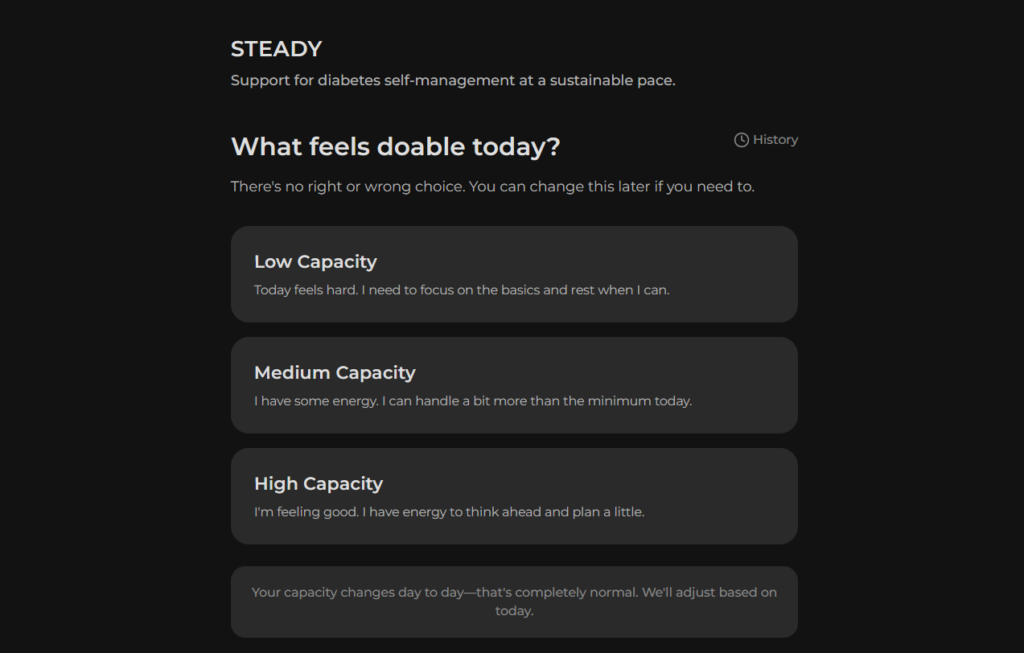

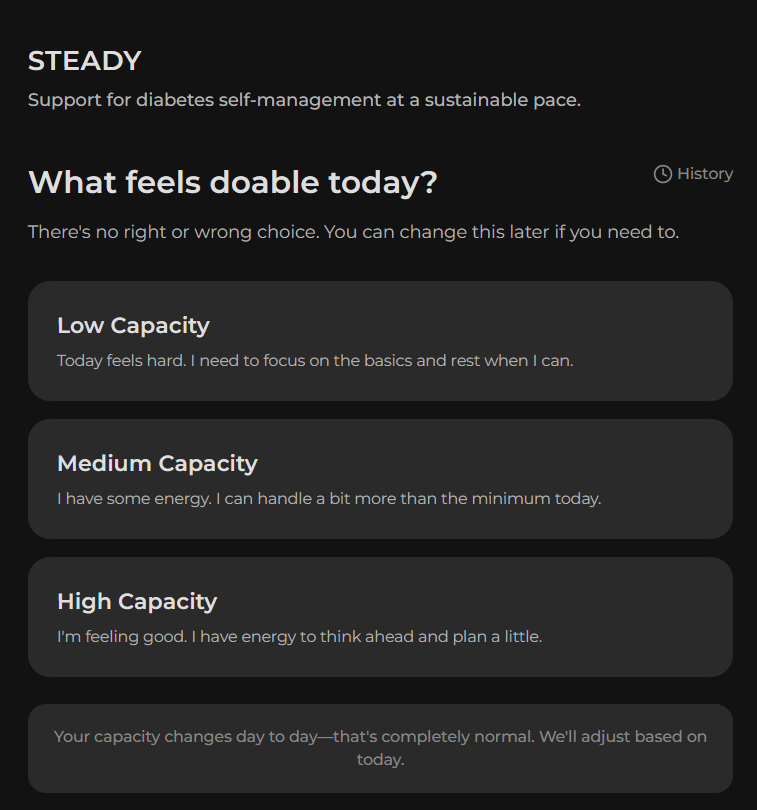

- Daily Capacity Check-in: Low/medium/high, acknowledging that energy and capability fluctuate

- Single AI-Supported Suggestion: One actionable focus matched to reported capacity (a suggestion, not a command)

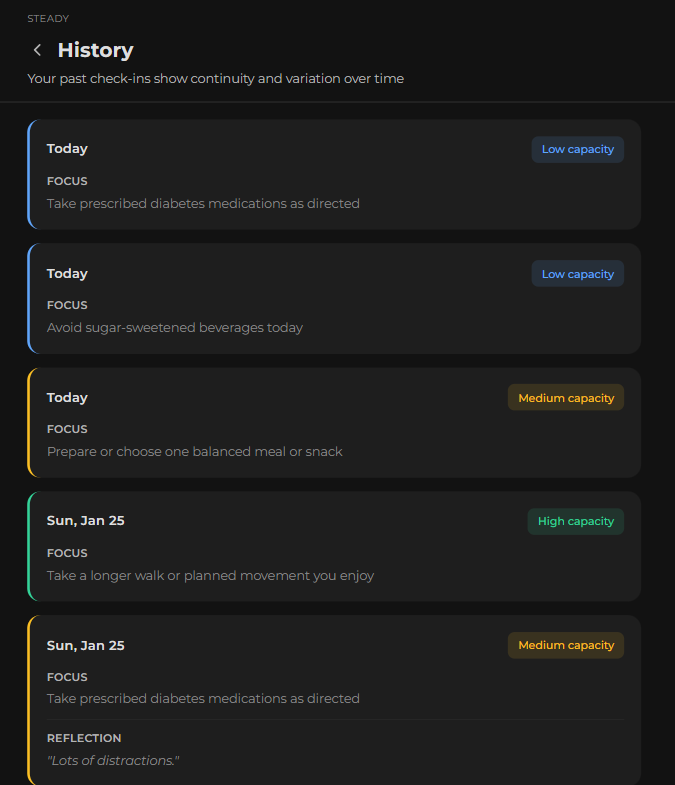

- Optional Non-Judgmental Reflection: “What helped / what got in the way,” framed as self-awareness, not data collection for external evaluation
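The three interactions above imply a very small data shape. The following is a minimal sketch of how a check-in entry and the History view might be modeled, not the prototype's actual implementation. The names `Capacity`, `Reflection`, `CheckIn`, and `history_view` are hypothetical and introduced only for illustration.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum
from typing import Optional


class Capacity(Enum):
    """The three self-reported capacity levels from the daily check-in."""
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class Reflection:
    """Optional free-text notes; both fields may be left empty."""
    what_helped: str = ""
    what_got_in_the_way: str = ""


@dataclass(frozen=True)
class CheckIn:
    """One day's entry. Hypothetical shape, assumed for this sketch."""
    day: date
    capacity: Capacity
    reflection: Optional[Reflection] = None  # skipping reflection is a valid outcome


def history_view(entries: list[CheckIn]) -> list[str]:
    """List past entries newest first, with no streaks, scores, or totals."""
    lines = []
    for entry in sorted(entries, key=lambda e: e.day, reverse=True):
        line = f"{entry.day.isoformat()}: {entry.capacity.value} capacity"
        if entry.reflection and entry.reflection.what_helped:
            line += f" | helped: {entry.reflection.what_helped}"
        lines.append(line)
    return lines
```

Note what the sketch leaves out: a missed day is simply absent from the list, and nothing is computed across entries, which mirrors the decision to avoid performance metrics.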

Intentionally Excluded

- Metrics, streaks, gamification (create performance pressure)

- Push notifications/reminders (imply the user can’t manage independently)

- Progress dashboards (invite comparison to impossible “perfect” adherence)

Every feature that typical health apps include was evaluated against the question: Does this reduce burden, or does it create new forms of judgment?

Usability Testing

Five participants (a mix of classmates and people with Type 2 diabetes) tested the functional web app prototype through asynchronous mobile testing. Focus areas: clarity, tone, and emotional response.

What Validated the Approach

- Tone consistently described as “non-judgmental” and “supportive”

- Suggestions felt actionable, not generic motivational content

- Users reported feeling “capable” and “in control,” which is critical for self-management

Refinements From Testing

- “Focus didn’t translate as a suggestion” → Added explicit framing: “A suggestion based on today’s capacity”

- “I wasn’t sure what I was reflecting on” → Reframed to clarify user benefit

- “‘If you need to’ felt fragile” → Shifted to autonomy language: “Do what feels manageable”

- Visual inconsistency in History view → Unified design language across all screens

Ethical AI Considerations

The MVP uses a pre-defined suggestion set matched to capacity levels, with no actual AI yet. This is intentional: it establishes baseline interaction patterns first, clarifies what “helpful” looks like before anything is automated, and avoids building bias into the system from the start.
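A pre-defined suggestion set matched to capacity can be as simple as a lookup table. The sketch below is one possible shape, not the prototype's code: the suggestion wording is placeholder text invented for illustration, and rotating by calendar date is an assumed selection rule (the project does not specify how one suggestion is picked).

```python
from datetime import date
from enum import Enum


class Capacity(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# Placeholder wording invented for this sketch; not the project's actual
# copy and not clinical guidance.
SUGGESTIONS = {
    Capacity.LOW: [
        "Keep water within reach today.",
        "Set out tomorrow's supplies tonight.",
    ],
    Capacity.MEDIUM: [
        "Take a ten-minute walk after one meal.",
        "Plan one meal for tomorrow.",
    ],
    Capacity.HIGH: [
        "Prepare a few meals for later in the week.",
        "Write down questions for your next appointment.",
    ],
}

# Framing line added after usability testing (quoted from the refinements above).
FRAMING = "A suggestion based on today's capacity"


def suggestion_for(capacity: Capacity, day: date) -> str:
    """Return exactly one suggestion for the reported capacity.

    Assumed rule: rotate through the set by calendar date, so the same
    day and capacity always produce the same suggestion.
    """
    options = SUGGESTIONS[capacity]
    return options[day.toordinal() % len(options)]
```

Because the lookup is deterministic and the full set is visible in one place, every possible output can be reviewed by hand before any generative component is introduced.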

Questions Guiding Future AI Integration

- Evidence grounding: How do you ensure AI suggestions align with clinical guidelines without becoming prescriptive?

- Guardrails: What suggestions should AI never make, even if they seem relevant?

- Transparency: How do you communicate AI’s role without creating distrust or over-reliance?

- Bias prevention: How do you avoid reinforcing existing health disparities in suggestion algorithms?

- User agency: How do you maintain user autonomy when AI “knows” their patterns?

These aren’t hypothetical; they are active design challenges as AI becomes more prevalent in health apps.

What This Project Demonstrated

Research Translation

Successfully applied chronic illness support frameworks from health education to digital product design.

Methodological Rigor

Used systematic qualitative research (netnography) to ground design decisions in real user experiences.

Evidence-Based Design

Every feature decision tied back to user research and health education principles.

Ethical AI Thinking

Identified critical questions about AI in healthcare before building the system.

Professional insight: The biggest challenge wasn’t learning UX methods; it was resisting the urge to add features. Health apps tend toward feature bloat on the assumption that “more support = better,” but this research suggested the opposite: sometimes less intervention is more supportive.

Next Steps

- Evidence-based suggestion frameworks grounded in diabetes care guidelines

- AI guardrails and transparency mechanisms

- Long-term testing to understand engagement patterns beyond initial novelty

- Exploration of care team visibility without creating surveillance

Broader question: How do we build AI-assisted health tools that reduce burden rather than add to it, especially for people managing chronic conditions long-term?